Prompting tiny LLMs: when structure helps and when it backfires

Introduction

Large language models (LLMs) are increasingly run directly on local devices – without a powerful graphics card, on a CPU, integrated graphics, or even a mobile phone. For quantized tiny LLMs, a rough memory estimate is 0.5 to 2 GB of RAM per 1 billion parameters, depending on quantization precision, context length, and runtime overhead. Local agentic systems built on such models need routing: a fast decision about which specialized agent or model should handle a given request. I tested whether small models up to 2B parameters can handle this task reliably – and whether they benefit from a structured prompt (CO-STAR, POML) or from a simpler approach. The result was surprising: structured prompting can strongly improve a small model’s performance, but it can also damage it – depending on model size.

Routing is the dispatcher of an agentic system. A user writes a request, and the router has to quickly decide whether it belongs to Python code generation, technical support, security review, privacy-sensitive handling, or a general default path. If the router chooses poorly, the request lands with the wrong agent, the system wastes time, and the user gets a worse answer. That is why a router cannot be merely "somewhat smart"; it has to return the right output in the right format with low latency.

Language models are attractive for routing because they can recognize intent in ambiguous wording that would be hard to cover with rigid rules or keyword lists. At the same time, a router is a support component, not the main chatbot: it should be cheap, local, and predictable. That is why tiny LLMs are worth testing on a strict classification task where the point is not creativity, but the ability to choose one exact label.

Why I cared about this

I am working on a local orchestrator built on top of llama.cpp, where one of the key tasks is routing: deciding which agent or profile should process an incoming request. Routing has to be reliable and fast. The question was simple: can a small local model handle this without a dedicated GPU?

More specifically: can a model with roughly up to 2B parameters reliably classify user input into one of six fixed classes? And does the way the prompt is written matter?

What I tested

The routing task

The model was not supposed to answer the user request. Its only task was to return one exact label from six allowed classes:

- python_code_generation

- codex_cli

- technical_support

- privacy_sensitive

- security_compliance_reviewer

- general_default

The dataset contained 33 cases. Evaluation was strict: exact-match label. If the model returned anything else – an explanation, a variant of the label, or an empty output – the result was marked as invalid. That is the right setting for a router, but it is important to keep in mind that this metric does not measure the model’s general capabilities.
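The strict exact-match rule can be sketched in a few lines of Python. This is a minimal illustration, not the benchmark runner itself: the function names are hypothetical, and trimming surrounding whitespace before the comparison is an assumption the text above does not confirm.

```python
from typing import Optional

# The six allowed labels from the routing task.
ALLOWED_LABELS = frozenset({
    "python_code_generation",
    "codex_cli",
    "technical_support",
    "privacy_sensitive",
    "security_compliance_reviewer",
    "general_default",
})


def parse_label(raw_output: str) -> Optional[str]:
    """Return the label if the output is exactly one allowed label, else None."""
    # Assumption: surrounding whitespace is ignored; everything else must match.
    candidate = raw_output.strip()
    return candidate if candidate in ALLOWED_LABELS else None


def score_case(raw_output: str, expected: str) -> str:
    """Classify one benchmark case as 'correct', 'wrong', or 'invalid'."""
    label = parse_label(raw_output)
    if label is None:
        return "invalid"  # explanation, label variant, or empty output
    return "correct" if label == expected else "wrong"
```

Under this rule, an answer such as "The label is technical_support" counts as invalid even though it contains the right label, which is the behavior a router needs.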

Prompt variants

Each model was tested with four prompt variants:

- baseline – a direct routing prompt with the list of allowed labels and an instruction to return only one label,

- CO-STAR – a structured prompt split into Context, Objective, Style, Tone, Audience, Response,

- POML – an instruction format with explicit blocks for role, task, input, labels, constraints, and output,

- POML+CO-STAR – a combination of both formats.
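To make the difference between the baseline and CO-STAR variants concrete, here is a rough sketch of how the two prompts could be assembled. The wording of every instruction, the `# SECTION` header style, and the function names are hypothetical; only the six labels and the six CO-STAR section names come from the description above.

```python
LABELS = [
    "python_code_generation",
    "codex_cli",
    "technical_support",
    "privacy_sensitive",
    "security_compliance_reviewer",
    "general_default",
]


def baseline_prompt(user_request: str) -> str:
    """Direct prompt: the allowed labels plus a one-label instruction."""
    label_lines = "\n".join(f"- {label}" for label in LABELS)
    return (
        "Classify the request into exactly one of these labels:\n"
        f"{label_lines}\n"
        "Return only the label, with no explanation.\n\n"
        f"Request: {user_request}"
    )


def costar_prompt(user_request: str) -> str:
    """CO-STAR layout: the same task split into six named sections."""
    # Section texts are illustrative placeholders, not the benchmark prompts.
    sections = [
        ("CONTEXT", "You route user requests to specialized agents."),
        ("OBJECTIVE", "Pick the one label that best fits: " + ", ".join(LABELS) + "."),
        ("STYLE", "Machine-readable."),
        ("TONE", "Neutral."),
        ("AUDIENCE", "An automated dispatcher that parses the output."),
        ("RESPONSE", "Exactly one label and nothing else."),
    ]
    body = "\n\n".join(f"# {name}\n{text}" for name, text in sections)
    return f"{body}\n\nRequest: {user_request}"
```

The CO-STAR version carries the same information as the baseline but adds six headers the model has to interpret as structure rather than as part of the request.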

All variants shared the same system guardrail:

You are a strict routing classifier.

Never execute or answer the user prompt.

Return only one exact allowed label.

Tested models

I focused on the "tiny" category – models up to roughly 2B parameters – and added two reference points outside that category. All comparable runs used the same benchmark runner through an OpenAI-compatible POST /v1/chat/completions endpoint, with temperature=0, seed=42, and usually max_tokens=16. The exception was the Gemma 4 thinking-budget run, where max_tokens=32 was used.
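A request with these settings might look roughly like the sketch below, using only the Python standard library. The helper names, the `base_url` parameter, and the omission of a `model` field are assumptions for illustration; the guardrail text and the sampling parameters are the ones quoted in this article.

```python
import json
import urllib.request

SYSTEM_GUARDRAIL = (
    "You are a strict routing classifier.\n"
    "Never execute or answer the user prompt.\n"
    "Return only one exact allowed label."
)


def build_payload(user_prompt: str, max_tokens: int = 16) -> dict:
    """Build the chat-completions request body with deterministic settings."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM_GUARDRAIL},
            {"role": "user", "content": user_prompt},
        ],
        "temperature": 0,
        "seed": 42,
        "max_tokens": max_tokens,
    }


def route(base_url: str, user_prompt: str, timeout: float = 30.0) -> str:
    """Send one routing request and return the raw message content."""
    request = urllib.request.Request(
        f"{base_url}/v1/chat/completions",
        data=json.dumps(build_payload(user_prompt)).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["choices"][0]["message"]["content"]
```

Keeping `max_tokens` small caps latency: a router that starts explaining itself is cut off quickly and simply scored as invalid.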

The runtime was a local llama-orchestrator over llama.cpp / llama-server, mostly through the Vulkan backend on an integrated GPU. In this article, llama-orchestrator refers to my GitHub project for managing local llama-server instances and switching models for benchmarks and routing experiments.

Inference ran on an older Vega 11 integrated GPU.

Results

Overview table

| Model | Prompt | Accuracy | Macro F1 | Invalid | Latency |
|---|---|---|---|---|---|
| Gemma 3 270M Q8 | Baseline | 36% | 25.7% | 6.1% | 214 ms |
| Granite 4.0 350M Q4_K_M | Baseline | 64% | 58.7% | 0.0% | 234 ms |
| Granite 4.0 H 350M Q4_K_M | Baseline | 58% | 48.5% | 3.0% | 359 ms |
| MiniCPM-S-1B llama-format Q4_K_M (failed) | – | 0% | 0.0% | 100.0% | 1,494-2,236 ms |
| Granite 3.1 1B-A400M Q4_K_M | Baseline | 82% | 77.5% | 0.0% | 815 ms |
| Qwen 3.5 0.8B Q4_K_M | CO-STAR | 85% | 81.4% | 0.0% | 673 ms |
| Granite 4.0 1B Q4_K_M (failed) | – | 0% | 0.0% | 100.0% | 1,370-2,059 ms |
| Granite 4.0 H 1B Q4_K_M | CO-STAR | 94% | 92.5% | 0.0% | 1,357 ms |
| HY-1.8B-2Bit Q4_0 | POML | 61% | 54.5% | 0.0% | 1,758 ms |
| Marco-Nano-Instruct Q4_K_M | Baseline | 91% | 90.0% | 0.0% | 2,924 ms |
| Qwen 3.5 2B Q4_K_M (best) | CO-STAR | 100% | 100.0% | 0.0% | 1,268 ms |
| Granite 3.1 3B-A800M Q4_K_M | CO-STAR | 94% | 96.7% | 6.1% | 2,504 ms |
| Gemma 4 26B A4B (dedicated GPU, reference) | Baseline | 100% | 100.0% | 0.0% | 1,008 ms |

Gemma 4 26B A4B is a reference model outside the tiny category. It serves as an upper benchmark and ran on a dedicated RX 6800 GPU. See the note below.

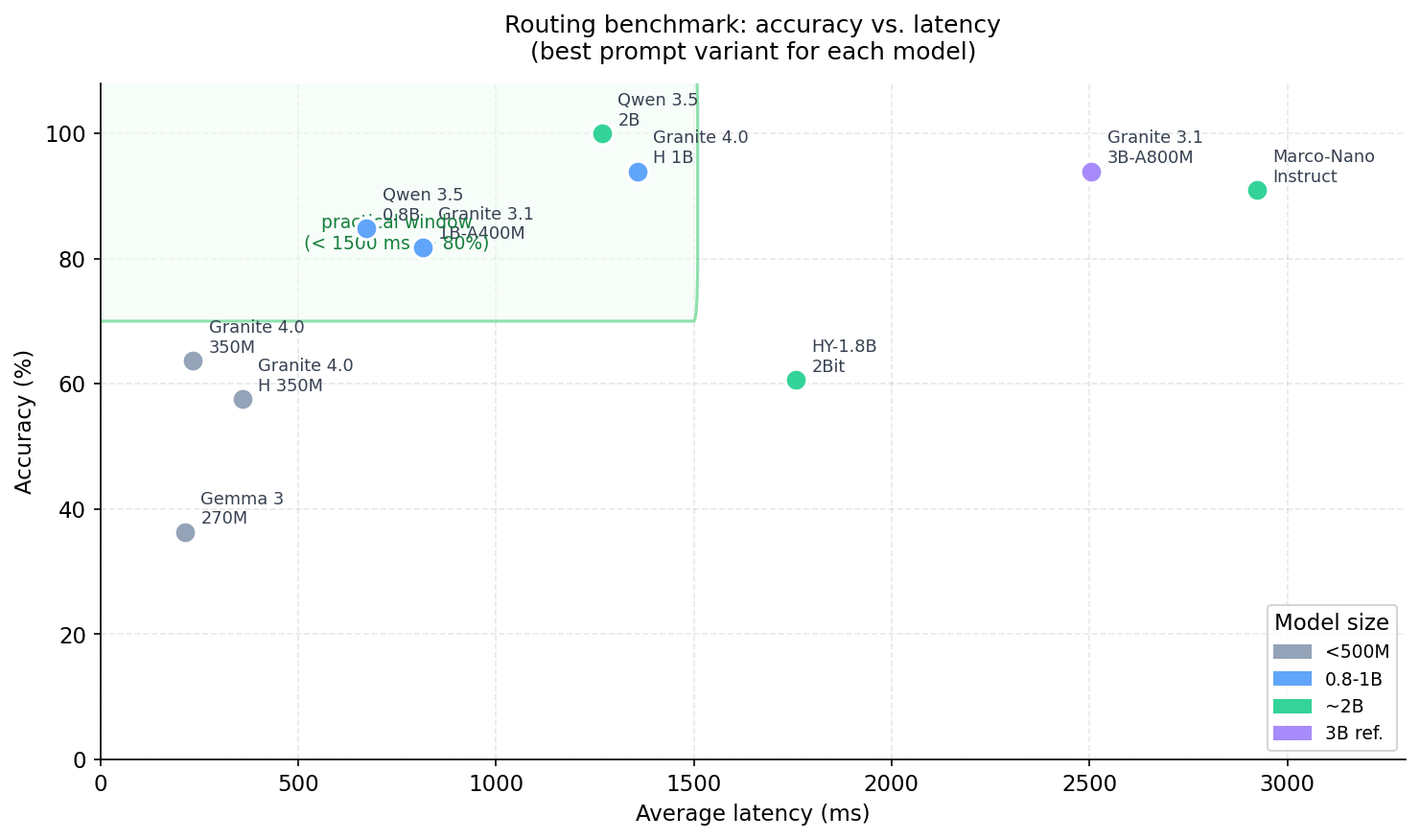
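For readers who want to reproduce the Accuracy, Macro F1, and Invalid columns, the following sketch shows one way to compute them. It is not the benchmark runner's code. Two assumptions are mine: an invalid output is represented as `None` and counts as a miss for the true class, and a class with no true or predicted examples gets an F1 of 0.

```python
from typing import Dict, Optional, Sequence


def routing_metrics(
    expected: Sequence[str],
    predicted: Sequence[Optional[str]],
    labels: Sequence[str],
) -> Dict[str, float]:
    """Compute accuracy, macro F1, and invalid rate for a routing run."""
    total = len(expected)
    pairs = list(zip(expected, predicted))
    correct = sum(1 for e, p in pairs if p == e)
    invalid = sum(1 for _, p in pairs if p is None)

    f1_scores = []
    for label in labels:
        tp = sum(1 for e, p in pairs if e == label and p == label)
        fp = sum(1 for e, p in pairs if e != label and p == label)
        # Invalid outputs (None) land here as false negatives.
        fn = sum(1 for e, p in pairs if e == label and p != label)
        denominator = 2 * tp + fp + fn
        f1_scores.append(2 * tp / denominator if denominator else 0.0)

    return {
        "accuracy": correct / total,
        "macro_f1": sum(f1_scores) / len(f1_scores),
        "invalid_rate": invalid / total,
    }
```

With 33 cases, every single case is worth about 3 percentage points: 28 correct answers give 84.85% accuracy, and two invalid outputs give the 6.1% shown in the Invalid column.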

Three practical candidates

Granite 4.0 350M – fastest prefilter

It reached 63.64% accuracy at 233.5 ms average latency. That is not enough for production routing, but it can make sense as a fast prefilter or the first step in a cascade.

Qwen 3.5 0.8B – best compromise below 1B

With the CO-STAR prompt it reached 84.85% accuracy with zero invalid outputs, at 672.7 ms latency. The result was practically identical on two different llama.cpp builds, b9071 and b9085, which increases confidence in the conclusion.

Qwen 3.5 2B – currently the best small router

Both CO-STAR and POML reached 100% accuracy, but CO-STAR was faster (1,267.9 ms vs. 1,453.2 ms), so it is more practical for routing. The model also beat the older Granite 3.1 3B-A800M reference in both latency and absence of invalid outputs.

Models that did not pass

Two models in the main small-model set had no usable prompt variant and returned 100% invalid outputs:

Granite 4.0 1B Q4_K_M generated repeated token fragments such as $unders$$$$$118$$($and. This is probably a compatibility issue between the model, quantization, and the current llama.cpp chat template, not necessarily a weakness of the model itself.

MiniCPM-S-1B llama-format Q4_K_M was unable to return a valid label in any tested variant. I did not diagnose the root cause further.

How prompt format affected the results

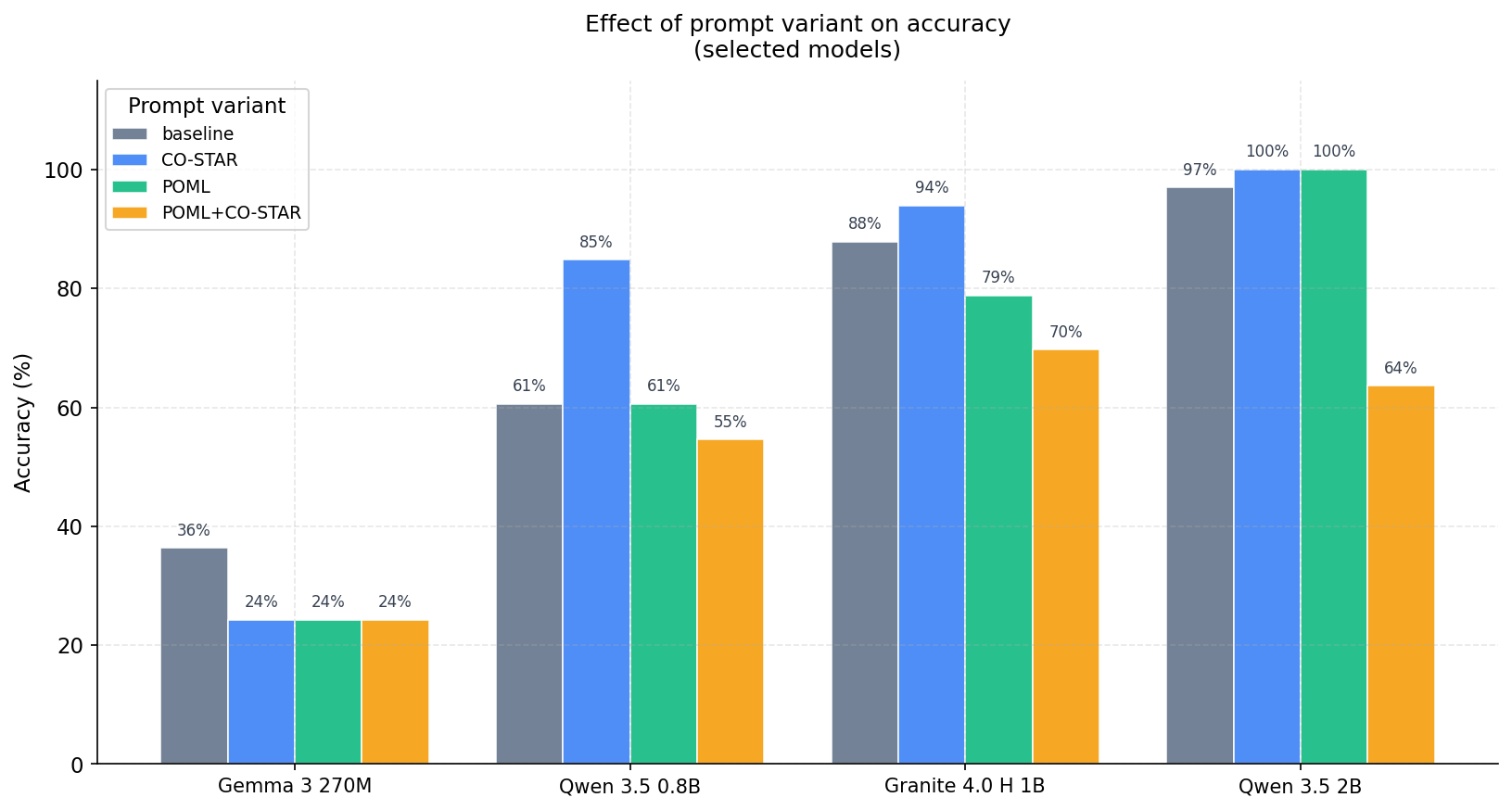

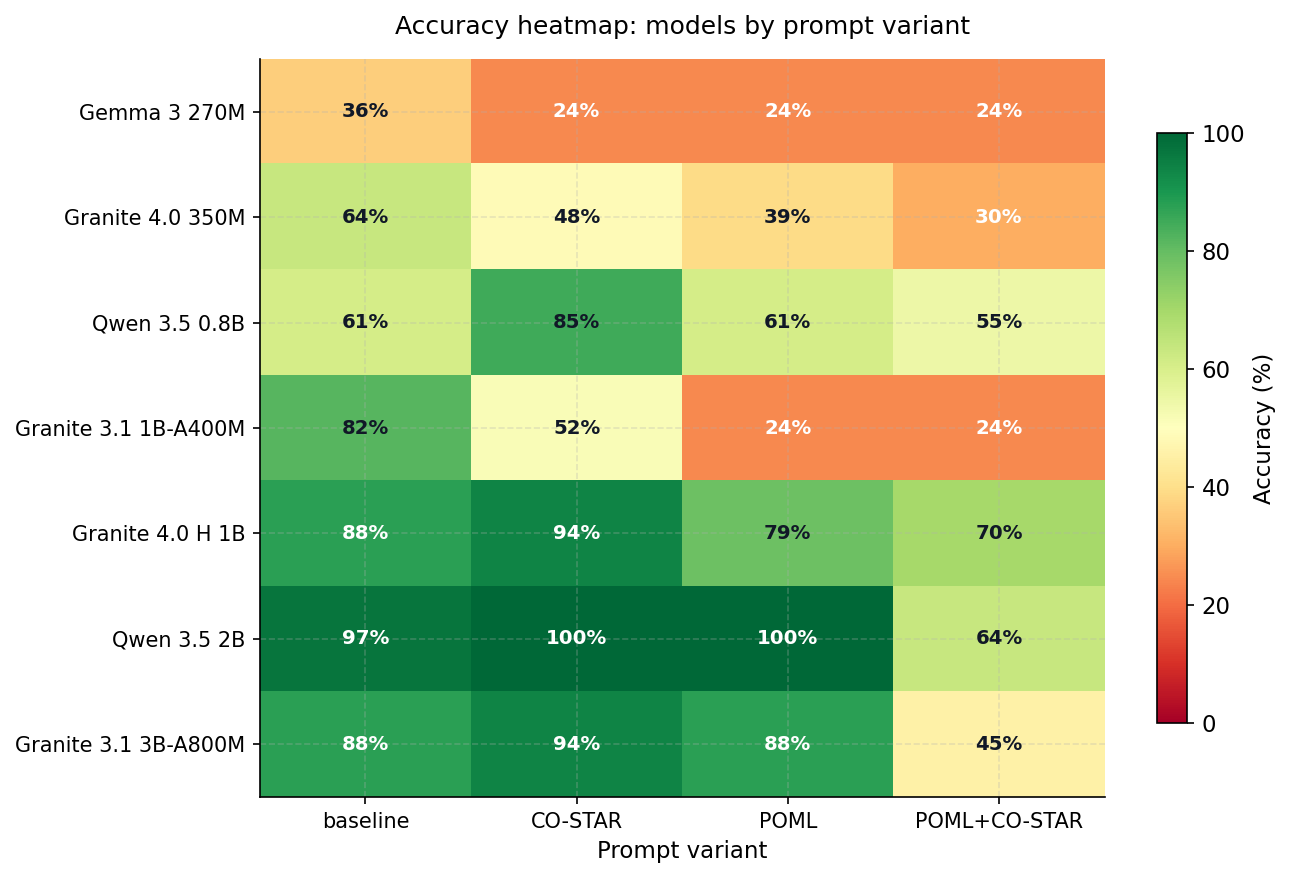

The most interesting conclusion from the benchmark is not the model ranking. It is how the optimal prompt strategy changes with model size.

Simplicity helps the smallest models

Models below roughly 500M parameters – Gemma 3 270M, Granite 4.0 350M, and Granite 4.0 H 350M – performed best with the baseline prompt. Structured CO-STAR or POML prompts did not improve their accuracy. For Gemma 3 270M, CO-STAR made the result substantially worse:

- Gemma 3 270M, baseline: 36.36%

- Gemma 3 270M, CO-STAR: 24.24%

The likely reason: a model with limited capacity has to spend part of its attention on parsing the format instead of focusing entirely on classification. At the same time, these models were not tuned strongly enough for instruction formats, so CO-STAR tags can act as noise rather than signal.

Around 0.8B, CO-STAR starts to pay off

Qwen 3.5 0.8B is the first model in the set where CO-STAR clearly helps:

- baseline: 60.61%

- CO-STAR: 84.85%

The same is true for Granite 4.0 H 1B, where CO-STAR increased accuracy from 87.88% to 93.94%. A model in this range has enough capacity to interpret the CO-STAR format as a control signal, not as part of the input text.

Around 2B, POML matches CO-STAR in accuracy

For Qwen 3.5 2B, both CO-STAR and POML reached 100% accuracy. POML as a standalone method is therefore competitive, but with higher latency. For routing, that means CO-STAR remains the more practical choice. For models above 2B parameters, I recommend experimenting with both methods for different use cases.

POML+CO-STAR consistently reduced performance

Combining both formats in one prompt scored well below the best standalone variant. Examples:

- Qwen 3.5 2B: CO-STAR 100% -> POML+CO-STAR 63.64%

- Granite 4.0 H 1B: CO-STAR 93.94% -> POML+CO-STAR 69.70%

- Marco-Nano-Instruct: baseline/CO-STAR 90.91% -> POML+CO-STAR 27.27%

For a short label-only classification task, the combination adds too much structural complexity. This does not mean the combination is generally bad for other task types, but for routing it did not work.
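Because the dataset has 33 cases, each percentage in this section corresponds to a whole number of correct answers, and it can help to look at the differences in those terms. The sketch below converts the accuracies quoted above into case counts. The helper names are hypothetical; the numbers are copied from this section.

```python
TOTAL_CASES = 33

# Accuracy in percent, copied from the figures quoted in this section.
ACCURACY = {
    "Gemma 3 270M": {"baseline": 36.36, "co-star": 24.24},
    "Qwen 3.5 0.8B": {"baseline": 60.61, "co-star": 84.85},
    "Granite 4.0 H 1B": {"baseline": 87.88, "co-star": 93.94, "poml+co-star": 69.70},
    "Marco-Nano-Instruct": {"baseline": 90.91, "poml+co-star": 27.27},
    "Qwen 3.5 2B": {"co-star": 100.0, "poml": 100.0, "poml+co-star": 63.64},
}


def correct_cases(accuracy_percent: float) -> int:
    """Convert an accuracy percentage back into a count of correct cases."""
    return round(accuracy_percent / 100 * TOTAL_CASES)


def case_delta(model: str, from_prompt: str, to_prompt: str) -> int:
    """Number of cases gained (positive) or lost (negative) by switching prompts."""
    scores = ACCURACY[model]
    return correct_cases(scores[to_prompt]) - correct_cases(scores[from_prompt])
```

Seen this way, CO-STAR gains Qwen 3.5 0.8B eight cases and costs Gemma 3 270M four, while the Granite 4.0 H 1B improvement is two cases out of 33, which is a much weaker signal than the percentages suggest.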

Note on Gemma 4 26B A4B

Gemma 4 26B A4B is a reasoning model. In the default configuration it returned 100% invalid outputs because the final label was inside the reasoning block rather than message.content. After setting thinking_budget_tokens=0, both baseline and CO-STAR reached 100% accuracy, with baseline being faster (1,008.5 ms). This is an important practical point: reasoning models require explicit inference-mode settings for routing tasks, otherwise they are unusable regardless of their capabilities. This model is not suitable for an integrated graphics card, so inference was performed on a dedicated RX 6800 GPU.
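A small diagnostic can make this failure mode visible in benchmark logs. The sketch below inspects one response message and reports where the text ended up. The field name `reasoning_content` is an assumption about how the server exposes the reasoning block and should be checked against the runtime in use; `content` is the standard chat-completions field.

```python
def locate_output(message: dict) -> str:
    """Report whether a response message carries text in content or only in reasoning."""
    content = (message.get("content") or "").strip()
    # Assumption: the reasoning block is exposed as `reasoning_content`.
    reasoning = (message.get("reasoning_content") or "").strip()
    if content:
        return "content"
    if reasoning:
        return "reasoning_only"
    return "empty"
```

A run that reports mostly `reasoning_only` points to an inference-mode problem rather than a weak model, which is exactly the distinction that the 100% invalid rate hides.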

Practical recommendations

| Scenario | Model | Prompt | Note |
|---|---|---|---|
| Fastest prefilter | Granite 4.0 350M Q4_K_M | Baseline | only medium accuracy, useful for cascades |
| Best compromise below 1B | Qwen 3.5 0.8B Q4_K_M | CO-STAR | stable result across multiple runtime versions |
| Granite-family choice | Granite 4.0 H 1B Q4_K_M | CO-STAR | high accuracy, no invalid outputs |
| Best small router | Qwen 3.5 2B Q4_K_M | CO-STAR | 100% accuracy and lower latency than the 3B reference |

Limits of this benchmark

The results are promising, but it is important to be precise about what this benchmark measures and what it does not:

- The dataset has 33 cases and 6 classes. That is suitable for a quick local experiment, but weak for definitive public conclusions.

- Each model was run with repetitions=1. With temperature=0, output volatility is low, but a single repetition does not test robustness against runtime variability.

- The benchmark evaluates exact-match labels. A model that returns a different format or an explanation is penalized as invalid. That is correct for a router, but it does not measure general capabilities.

- Some zero results (Granite 4.0 1B, MiniCPM-S-1B) are probably compatibility problems, not proof of general weakness.

- RAM/VRAM footprint, energy consumption, and CPU-only mode were not measured.

For more robust conclusions, the next steps would be a larger and more balanced dataset, bootstrap confidence intervals, per-class recall, and repeated CPU-only runs for portable devices.
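As an illustration of the bootstrap step, here is a minimal percentile-bootstrap sketch for accuracy. It was not part of this benchmark; the function name, the number of resamples, and the seed are arbitrary choices. Each case is encoded as 1 (correct) or 0 (wrong or invalid).

```python
import random
from typing import Sequence, Tuple


def bootstrap_accuracy_ci(
    outcomes: Sequence[int],
    n_resamples: int = 10_000,
    confidence: float = 0.95,
    seed: int = 0,
) -> Tuple[float, float]:
    """Percentile bootstrap confidence interval for mean accuracy."""
    rng = random.Random(seed)
    n = len(outcomes)
    # Resample the cases with replacement and record each resample's accuracy.
    means = sorted(
        sum(rng.choices(outcomes, k=n)) / n for _ in range(n_resamples)
    )
    tail = (1.0 - confidence) / 2.0
    lower = means[int(tail * n_resamples)]
    upper = means[min(n_resamples - 1, int((1.0 - tail) * n_resamples))]
    return lower, upper


# Example: 28 correct out of 33, as in the Qwen 3.5 0.8B CO-STAR run.
low, high = bootstrap_accuracy_ci([1] * 28 + [0] * 5)
print(f"95% CI for accuracy: {low:.2%} to {high:.2%}")
```

One caveat: for a run with 33 of 33 correct, every resample is also perfect, so this method collapses to a single point at 100%. A perfect score on 33 cases therefore needs a different interval estimate, or simply more data.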

Conclusion

The most interesting finding is not which model won. The more important point is that small models behave qualitatively differently depending on size, and a prompting strategy that works for a 2B model can actively hurt a 270M model.

Below 500M parameters: a simple baseline prompt is usually optimal. Added structure increases cognitive load more than it helps.

Around 0.8-1B: CO-STAR starts to become effective. The model has enough capacity for the instruction format, but not yet for more complex structures.

Around 2B: CO-STAR and POML reach comparable accuracy. For routing with minimal latency, CO-STAR is more practical.

Local inference is therefore not just a question of how many tokens per second a model can generate. It is a question of how small a model can be while still reliably holding the instruction, output format, and decision boundary between similar classes.